The one thing everyone knows about quantum mechanics is its legendary weirdness, in which the basic tenets of the world it describes seem alien to the world we live in. There is superposition, where things can be in two states simultaneously: a switch both on and off, a cat both dead and alive. And there is entanglement, what Einstein called “spooky action at a distance”, in which objects are invisibly linked even when separated by huge distances.

But weird or not, quantum theory is approaching a century old and has found many applications in daily life. To borrow a remark attributed to John von Neumann about mathematics: “You don’t understand quantum mechanics, you just get used to it.” Much of electronics is based on quantum physics, and the application of quantum theory to computing could open up huge possibilities for the complex calculations and data processing we see today.

Imagine a computer processor able to harness superposition, to calculate the result of an arbitrarily large number of permutations of a complex problem simultaneously. Imagine how entanglement could be used to allow systems on different sides of the world to be linked and their efforts combined, despite their physical separation. Quantum computing has immense potential, making light work of some of the most difficult tasks, such as simulating the body’s response to drugs, predicting weather patterns or analysing big data sets.

Such processing possibilities are needed. The first transistors could only just be held in the hand, while today they measure just 14 nm, 500 times smaller than a red blood cell. This relentless shrinking, predicted by Intel co-founder Gordon Moore and known as Moore’s law, has held true for 50 years, but cannot hold indefinitely. Silicon can only be shrunk so far, and if we are to continue benefiting from the performance gains we have become used to, we need a different approach.

Quantum fabrication

Advances in semiconductor fabrication have made it possible to mass-produce quantum-scale semiconductors: electronic circuits that exhibit quantum effects such as superposition and entanglement.

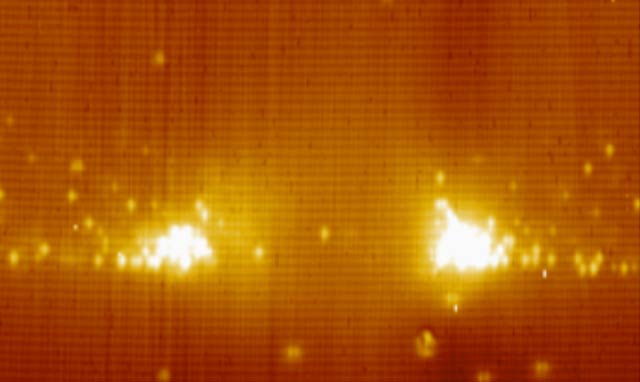

The image above, captured at the atomic scale, shows a cross-section through one potential candidate for the building blocks of a quantum computer, a semiconductor nano-ring. Electrons trapped in these rings exhibit the strange properties of quantum mechanics, and semiconductor fabrication processes are poised to integrate the elements required to build a quantum computer. While we may be able to construct a quantum computer using structures like these, there are still major challenges involved.

In a classical computer processor, a huge number of transistors interact conditionally and predictably with one another. But quantum behaviour is highly fragile; for example, under quantum physics, even measuring the state of the system, such as checking whether the switch is on or off, actually changes what is being observed. Conducting an orchestra of quantum systems to produce useful output that couldn’t easily be handled by a classical computer is extremely difficult.
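The point about measurement can be made concrete with a toy model. The sketch below is only an illustration, not a description of any real hardware: the `Qubit` class and its `measure` method are hypothetical names, and the model assumes the textbook rule that a measurement returns 0 or 1 with probability given by the squared amplitude and then leaves the system in the state that was observed.

```python
import math
import random


class Qubit:
    """Toy single-qubit model (hypothetical, for illustration only)."""

    def __init__(self, amp0, amp1):
        # Normalise so the two outcome probabilities add up to 1.
        norm = math.sqrt(abs(amp0) ** 2 + abs(amp1) ** 2)
        self.amp0 = amp0 / norm
        self.amp1 = amp1 / norm

    def measure(self, rng):
        # Outcome 0 occurs with probability |amp0|^2, otherwise outcome 1.
        outcome = 0 if rng.random() < abs(self.amp0) ** 2 else 1
        # The act of measuring replaces the superposition with the result.
        if outcome == 0:
            self.amp0, self.amp1 = 1.0, 0.0
        else:
            self.amp0, self.amp1 = 0.0, 1.0
        return outcome


if __name__ == "__main__":
    rng = random.Random()
    switch = Qubit(1, 1)  # equal superposition of "off" (0) and "on" (1)
    first = switch.measure(rng)
    repeats = [switch.measure(rng) for _ in range(5)]
    print("first look:", first, "later looks:", repeats)
```

In this model the first look is a coin flip, but every later look at the same qubit repeats the first answer: the superposition did not survive being checked.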

But there have been huge investments: the UK government announced £270m in funding for quantum technologies in 2014, for example, and the likes of Google, Nasa and Lockheed Martin are also working in the field. It’s difficult to predict the pace of progress, but a useful quantum computer could be 10 years away.

The basic element of quantum computing is known as a qubit, the quantum equivalent of the bits used in traditional computers. To date, scientists have harnessed quantum systems to represent qubits in many different ways, ranging from defects in diamonds, to semiconductor nano-structures or tiny superconducting circuits. Each of these has its own advantages and disadvantages, but none yet has met all the requirements for a quantum computer, known as the DiVincenzo criteria.

The most impressive progress has come from D-Wave Systems, a firm that has managed to pack hundreds of qubits on to a small chip similar in appearance to a traditional processor.

Quantum secrets

The benefits of harnessing quantum technologies aren’t limited to computing, however. Whether or not quantum computing will extend or augment digital computing, the same quantum effects can be harnessed for other means. The most mature example is quantum communications.

Quantum physics has been proposed as a means to prevent forgery of valuable objects, such as a banknote or diamond. Here, the unusual negative rules embedded within quantum physics prove useful: perfect copies of unknown states cannot be made, and measurements change the systems they are measuring. A quantum anti-counterfeiting scheme combines these two limitations, storing an object’s identity in quantum states so that the identity cannot be copied.

The concept of quantum money is, unfortunately, highly impractical, but the same idea has been successfully extended to communications. The idea is straightforward: the act of measuring quantum superposition states alters what you try to measure, so it’s possible to detect the presence of an eavesdropper making such measurements. With the correct protocol, such as BB84, it is possible to communicate privately, with that privacy guaranteed by fundamental laws of physics.
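How an eavesdropper gives herself away can be shown with a rough classical simulation of a BB84-style exchange. This is a sketch under simplifying assumptions rather than a real implementation: the function names are hypothetical, the channel is assumed to be noise-free, and the eavesdropper is assumed to use the simplest attack, measuring each signal in a randomly chosen basis and sending on what she saw.

```python
import random


def measure(bit, prep_basis, meas_basis, rng):
    """Measuring in the preparation basis returns the encoded bit;
    measuring in the other basis gives a random result."""
    return bit if prep_basis == meas_basis else rng.randint(0, 1)


def bb84_error_rate(n_signals, eavesdrop, seed=0):
    """Fraction of mismatched bits among signals where sender and
    receiver happened to choose the same basis (the 'sifted' key)."""
    rng = random.Random(seed)
    kept = 0
    errors = 0
    for _ in range(n_signals):
        # The sender picks a random bit and one of two bases.
        sent_bit = rng.randint(0, 1)
        sent_basis = rng.choice("+x")
        bit, basis = sent_bit, sent_basis

        if eavesdrop:
            # Intercept-and-resend: the eavesdropper must guess a basis,
            # and what she forwards is prepared in her basis.
            spy_basis = rng.choice("+x")
            bit = measure(bit, basis, spy_basis, rng)
            basis = spy_basis

        # The receiver also guesses a basis at random.
        recv_basis = rng.choice("+x")
        recv_bit = measure(bit, basis, recv_basis, rng)

        # Bases are compared in public; mismatched rounds are discarded.
        if recv_basis == sent_basis:
            kept += 1
            errors += recv_bit != sent_bit
    return errors / kept if kept else 0.0


if __name__ == "__main__":
    print("error rate, private line:", bb84_error_rate(4000, eavesdrop=False))
    print("error rate, line tapped: ", bb84_error_rate(4000, eavesdrop=True))
```

On an untapped line the sender and receiver agree on every bit they keep. With the eavesdropper present, roughly a quarter of the kept bits disagree, so comparing a sample of them in public is enough to reveal that someone was listening.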

Quantum communication systems are commercially available today from firms such as Toshiba and ID Quantique. While the implementation is clunky and expensive now, it will become more streamlined and miniaturised, just as transistors have miniaturised over the last 60 years.

Improvements to nanoscale fabrication techniques will greatly accelerate the development of quantum-based technologies. And while useful quantum computing still appears to be some way off, its future is very exciting indeed.

- Robert Young is research fellow and lecturer at Lancaster University

- This article was originally published on The Conversation