In the ongoing discussion around artificial intelligence, the conversation often centres on efficiency, automation and replacing human labour.

Yet research by neuroscientist and AI expert Vivienne Ming challenges this assumption: the true value of AI lies not in outsourcing work to machines, but in deep human-AI collaboration.

When humans and AI work together, when we co-create, the outcomes are consistently more innovative, persuasive and transformative than work produced by either alone, she said.

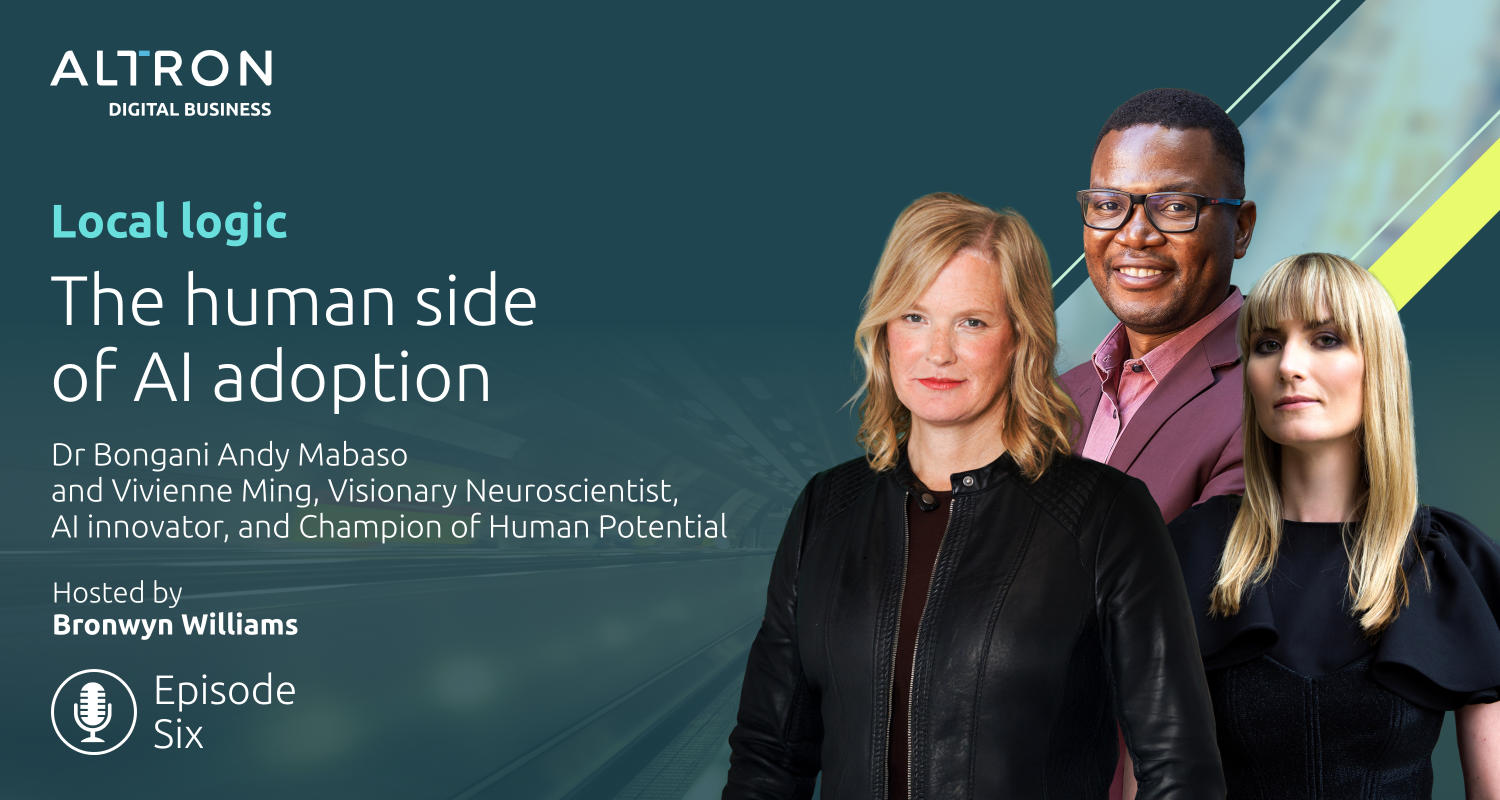

This perspective is central to Altron’s Local Logic podcast series, where technology leaders discuss how South African businesses can use AI, data and digital innovation to create value for their customers. Local Logic explores topics such as enterprise AI, innovation frameworks and data-driven decision-making, featuring guests including corporate CTOs and technology entrepreneurs.

Ming’s research makes one point abundantly clear: AI should augment human intelligence, not replace it. “My only interest paradoxically is how we make better people. In the end, for me, it’s always just about people. You want better AI? Build better people.”

AI as augmentation, not replacement

Yet research from Anthropic reveals a troubling gap between potential and practice: only about 5% of university students use AI tools like Claude for evaluation and critique, suggesting that just 5% are truly co-creating. The remaining 95% are using AI to outsource rather than collaborate.

This distinction matters because the temptation to outsource is built on a flawed premise. “If the machines do all the boring stuff, you get more boring stuff,” Ming warned. “If AI reads and writes all of your e-mails, you get more e-mails, not fewer. And we need machines that challenge us to be better ourselves.”

The stakes extend beyond productivity metrics. “What is transformative?” Ming asked.

“Where, instead of marketing slop, do we get cures for cancer? And that comes from deep, effortful engagement, this constant engagement.”

When humans co-create with AI, the results are more persuasive and innovative. For instance, studies show that essays and ideas co-created by humans and AI (rather than fully machine-generated) are more convincing to third-party audiences.

Similarly, a study by Procter & Gamble found co-created ideas were “more likely to receive awards and actually be picked up for development”. AI-generated content may improve grammar and mechanics, but once the human is taken out, it almost never takes you to a new, transformative place.

Throughout the series, Local Logic guests have reinforced this idea. During episode 1, Bongani Andy Mabaso, chief technology officer of Altron Group, said: “We believe AI adoption is about empowering people. If we can empower people to do more, be more productive, then you can have higher targets.”

In episode 2, Lee Naik, CEO of TransUnion South Africa, framed AI as “more of a partner versus competition”, emphasising, like Ming, collaboration over replacement.

For Mabaso, this partnership has changed how he approaches his work. “I actually feel more challenged when I’m using AI, and I can achieve a lot more. I almost feel like it’s my own person. I have so many ideas, and I feel like I don’t have enough time to do all of them. And I feel like this thing helps me to move a little bit faster. So, I’m not actually doing less challenging work, actually, I’m taking on more challenging things.”

Yet there’s a cautionary note: outsourcing thinking entirely to AI is a common failure mode. As Mabaso warned, businesses often see employees rely on AI to do the thinking for them and then present the output as their own work. True value arises when people stay actively involved, using AI as a partner for co-creation rather than a shortcut.

‘Well-posed problems’

AI is very good at what Ming termed “well-posed problems”, where the answer to the question is known. Computers can analyse information, forecast results and optimise a solution when the parameters are well understood. However, humans are essential when it comes to “ill-posed problems”, where neither the question nor the answer is clear.

“Machines are fundamentally better at answering well-posed problems, while humans excel at ill-posed problems (where we don’t even know the question),” Ming explained. “Everything interesting about the world involves ill-posed problems. And right now, that’s where we need humans to be.” Examples include patient care, complex contracts or new product development: contexts where every situation is unique and unpredictable.

Education and organisational design often focus on well-posed tasks, she added. “We need our education system to guide children towards being able to engage with uncertainty and explore the unknown. And yet we’re still asking them to answer on demand a bunch of already known things on a sheet of paper.”

In other words, organisations risk underutilising human resources when they treat every problem as predictable. Co-creation ensures that AI handles structured tasks while humans navigate ambiguity and innovation.

Finally, Ming stressed that ethical AI adoption depends on cultivating courage and human agency within organisations. “Ethics isn’t about checklists but about owning the whole problem and understanding the consequences of technology deployment, starting with asking why repeatedly until you truly understand the problem you’re trying to solve.”

Humans must remain accountable for outcomes. AI can assist, but it cannot bear responsibility. Organisations should design AI systems to empower employees to own decisions and consequences, rather than outsource accountability.

Experiments demonstrate the stakes: highly educated executives could be induced to act against their moral judgment under pressure, later rationalising their choices. Meanwhile, about 11% of employees accounted for 80% of “untracked productivity” by helping others, motivated by a sense of purpose beyond themselves.

“Courage is something you practise,” Ming stressed. “If you’re not practising being courageous, making hard choices when it’s easy, I promise you, you will not be who you thought you were when the choices are hard.” Local Logic discussions reinforce this. Mabaso’s advice is “to not be afraid to fail”; if fear dominates the organisational culture, he noted, “you will have very predictable people doing the thing that was done last year”.

Training, rewards and storytelling can nurture courage, preparing organisations to make ethical, high-stakes decisions in the age of AI. Co-creation is not just a productivity tool; it is a practice in responsible, courageous and human-centred AI adoption.

AI as augmentation

AI is most valuable when humans and machines collaborate, tackling complex, ill-posed problems and amplifying creativity. The organisations that will unlock the transformative power of AI are those that see it as augmentation rather than replacement, treat ethics as a responsibility and instil courage in their employees.

When humans co-create with AI, they not only become more productive; they also become better thinkers, innovators and decision-makers.

Listen to the podcast series here

- Read more articles by Altron Digital Business on TechCentral

- This promoted content was paid for by the party concerned