I have been idly building the specs for my next desktop PC – a Linux gaming and AI rig – for the better part of the past year. It is starting to feel like a doomed exercise.

The plan was modest enough: up to 128GB of memory to run a decent open-weight large language model locally, a current-generation CPU with a respectable thread count and a GPU that wouldn't embarrass itself when asked to load a 70-billion-parameter model into VRAM.

There’s just one problem. Building a rig like that today can cost as much as a second-hand car. The reason, in a phrase, is that hyperscalers have eaten the consumer hardware market alive.

Reuters reported this week that Korean memory maker SK Hynix is being aggressively courted by global tech firms offering to invest directly in its production lines. One source described the chip maker’s available production capacity as “essentially zero”.

SK Hynix's shares are up roughly 154% this year. Microsoft alone has guided the market towards $190-billion in capital expenditure in 2026, of which the company has attributed an estimated $25-billion to rising chip and component costs. Meta Platforms, Google and Amazon are spending similarly eye-watering amounts. The little guy can't catch a break.

Hey, big spenders

The scale of the commitment is starting to show on the hyperscalers’ own balance sheets. The Financial Times reported on Friday (paywall) that combined free cash flow at Amazon, Google parent Alphabet, Microsoft and Meta is forecast to fall to roughly $4-billion in the third quarter – down from a post-pandemic quarterly average of $45-billion – with full-year free cash flow on track for its lowest level since 2014, a year when the four had combined revenue around a seventh of today’s.

Amazon is expected to invest $200-billion in 2026 and burn cash for the year; Microsoft will burn cash in at least one quarter, Meta in the second half, and Alphabet's full-year free cash flow will hit its lowest in more than a decade. Both Alphabet and Meta have paused share buybacks – Alphabet's first such pause since 2015 – and have between them issued more than $100-billion of new debt in recent months to keep the build-out funded. Combined, the four are committing roughly $725-billion to AI infrastructure this year, up from the roughly $600-billion expected just a few months ago.

As a result, memory chip makers – principally SK Hynix, Samsung Electronics and Micron Technology – have reallocated wafer capacity away from commodity DDR5 and consumer DRAM towards the high-margin, high-bandwidth memory used in AI accelerators: HBM3e in current-generation Nvidia and AMD parts, with HBM4 now ramping for next-generation silicon including Nvidia’s Rubin platform.

The outcome has been described, only half-jokingly, as the "RAMpocalypse". DRAM prices rose by roughly 172% in 2025. HP told investors on its most recent earnings call that memory now accounts for 35% of the bill of materials in a PC, up from 15% to 18% the previous quarter.

A 32GB DDR5 kit that sold for under $90 a year ago will set you back as much as $530 today. The 128GB-plus configurations I would actually need to run a useful local LLM are starting to demand the sort of money that might require a discussion with a bank manager.

For South African buyers, the picture is bleaker. As TechCentral reported in March, local retailers Evetech, Dreamware Technology and Tech.co.za were already describing steep price moves: DDR5 up by as much as 230% in a single quarter, DDR4 modules up 150% to 200%, SSDs up 35% to 50%. Prices have risen further since then.

Whatever cushion South Africans might have hoped for from a firming rand has been wiped out by the global crunch, and the country sits well down the global allocation queue regardless of what the currency does on a given day.

The graphics card market has been in a bad way for longer still. Nvidia's GeForce RTX 5090 launched in 2025 at US$1 999; custom variants now retail for $3 000 or more. Production of the 5090 and 5080 has reportedly been cut by 30% to 40% as Nvidia diverts GDDR7 memory and packaging capacity to its data centre business, which now accounts for the overwhelming majority of group revenue. A single high-end AI accelerator can sell for around $40 000; a flagship gaming card cannot. Nvidia would be derelict in its duty to shareholders if it did anything else. Still, it hurts, and gaming YouTubers are venting their bile at the company because of it.

The CPU is next

Now, what is happening to CPUs threatens to make an awful situation even worse.

For most of the past two years, the AI boom was a GPU and memory story. CPUs were not where the heavy AI workloads ran, and their prices remained relatively sane. That narrative is now flipping. Agentic AI – the move from chat-style interactions towards autonomous software agents that plan, call tools and execute multi-step tasks – turns out to lean more heavily on CPUs than on GPUs.

AI agents need exactly the kind of mixed, general-purpose throughput at which CPUs excel. Even chat inference, while GPU-heavy on the token-generation side, requires significant CPU resources for orchestration. Scale that to the volumes at which the hyperscalers now plan to deploy these chips, and the CPU side of the data centre is becoming the new bottleneck.

Nowhere has this shift been more visible than at Intel, which a year ago looked like a candidate for a strategic break-up. Its share price has more than quintupled since, briefly touching $114 this week and lifting the company's market value past $570-billion.

Intel’s long-promised 18A process node – a technical return to form for a company that had fallen behind its main rival, Taiwan’s TSMC – is now in volume production at its new Arizona fabrication plant. The first commercial Panther Lake processors built on 18A shipped earlier this year and are getting good reviews in the technical press.

More significant still, Apple, which dropped Intel as a silicon supplier in late 2023, is reportedly considering returning to the company, this time to have Intel manufacture some of its own chips.

For Intel, this is vindication. For the rest of us, it is potentially terrible news. Capacity that might once have been pointed at the consumer market is being committed to data centre and hyperscaler customers years in advance. AMD enjoys similar pricing power and has every incentive to hold the line on prices. Its share price, too, has climbed rapidly in recent months.

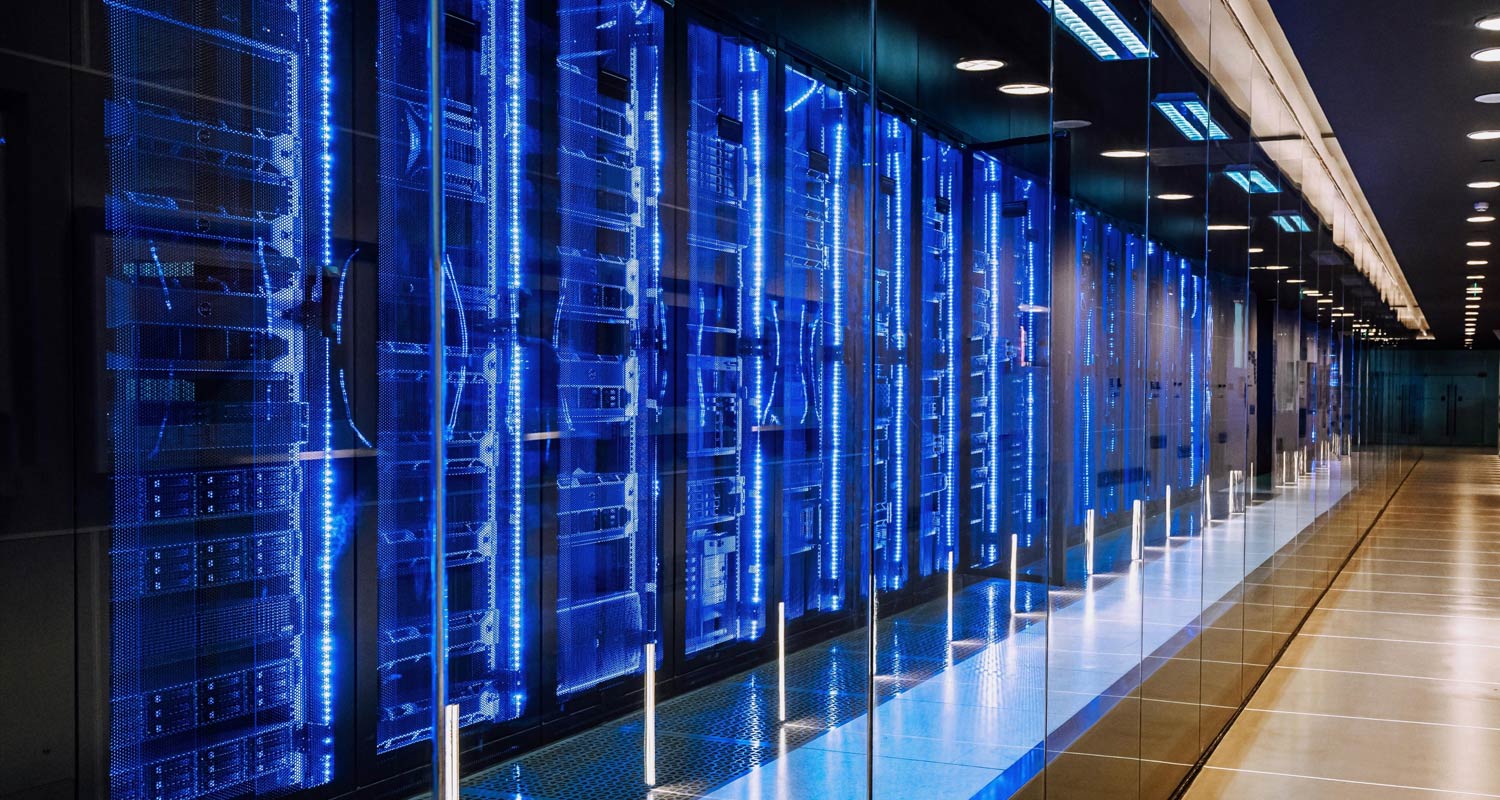

What ties the threads together is that the memory shortage and the CPU squeeze have a common cause: the entire global semiconductor supply chain is now optimising for a small number of very large customers building data centres. And there is no relief in sight – AI infrastructure may yet turn out to be one of the biggest speculative bubbles in history, but there’s no reason to believe that it’s going to burst anytime soon.

So technology hobbyists like me, who want to run AI models on our own machines and have hardware powerful enough for the latest games, face a vexing problem. The economics of on-device computing have, for the first time in the PC's long history, been broken by the data centre. And there is no obvious fix. – (c) 2026 NewsCentral Media

- Duncan McLeod is editor of TechCentral
