Jack Ma-backed Ant Group used Chinese-made chips to develop techniques for training AI models that would cut costs by 20%, according to people familiar with the matter.

Ant used domestic chips, including from affiliate Alibaba Group and Huawei Technologies, to train models using the so-called Mixture of Experts machine learning approach, the people said.

It got results similar to those from Nvidia chips like the H800, they said, asking not to be named as the information isn’t public. Ant is still using Nvidia for AI development but is now relying mostly on alternatives, including chips from AMD and Chinese manufacturers, for its latest models, one of the people said.

The models mark Ant’s entry into a race between Chinese and US companies that’s accelerated since DeepSeek demonstrated how capable models can be trained for far less than the billions invested by OpenAI and Google. Ant’s work underscores how Chinese companies are trying to use local alternatives to the most advanced Nvidia semiconductors. Although it is not Nvidia’s most advanced chip, the H800 is a relatively powerful processor, and the US currently bars its export to China.

The company published a research paper this month that claimed its models at times outperformed Meta Platforms in certain benchmarks. If they work as advertised, Ant’s platforms could mark another step forward for Chinese AI development by slashing the cost of inferencing or supporting AI services.

‘Without premium GPUs’

As companies pour significant money into AI, MoE models have emerged as a popular option, gaining recognition for their use by Google and Hangzhou start-up DeepSeek, among others. That technique divides tasks into smaller sets of data, very much like having a team of specialists who each focus on a segment of a job, making the process more efficient. Ant declined to comment.
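The "team of specialists" idea can be illustrated with a toy routing step. The sketch below is a generic top-k gating example, not Ant's implementation: the function names, the four stand-in experts and the gate scores are all hypothetical, chosen only to show that a router scores every expert but runs just a few of them for each input.

```python
import math

def softmax(scores):
    """Convert raw gate scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    weight_sum = sum(probs[i] for i in chosen)
    return [(i, probs[i] / weight_sum) for i in chosen]

def moe_layer(x, gate_scores, experts, k=2):
    """Run only the selected experts and blend their outputs by weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Four toy "experts" (hypothetical): each simply scales its input differently.
experts = [lambda x, m=m: m * x for m in (1.0, 2.0, 3.0, 4.0)]
gate_scores = [0.1, 2.0, 0.3, 1.5]  # assumed router output for one input

selected = route_top_k(gate_scores, k=2)
output = moe_layer(10.0, gate_scores, experts, k=2)
print("experts used:", [i for i, _ in selected])
print("blended output:", round(output, 2))
```

Only two of the four experts do any work here, which is the source of the efficiency: the model can hold many parameters while spending computation on a fraction of them per input.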

However, the training of MoE models typically relies on high-performing chips like the graphics processing units Nvidia sells. The cost has to date been prohibitive for many small firms and limited broader adoption. Ant has been working on ways to train LLMs more efficiently and eliminate that constraint. Its paper title makes that clear, as the company sets the goal to scale a model “without premium GPUs”.

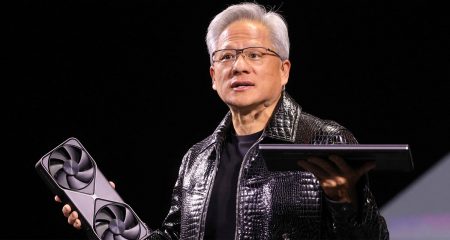

That goes against the grain of Nvidia. CEO Jensen Huang has argued that computation demand will grow even with the advent of more efficient models like DeepSeek’s R1, positing that companies will need better chips to generate more revenue, not cheaper ones to cut costs. He’s stuck to a strategy of building big GPUs with more processing cores, transistors and increased memory capacity.

Ant said it cost about C¥6.35-million (R16-million) to train one trillion tokens using high-performance hardware, but its optimised approach would cut that to C¥5.1-million using lower-specification hardware. Tokens are the units of information that a model ingests in order to learn about the world and deliver useful responses to user queries.
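As a rough check, the two figures Ant gave can be compared directly with the roughly 20% saving cited by the people familiar with the matter. The short calculation below uses only the yuan amounts and the one-trillion-token volume quoted above; the variable names are illustrative.

```python
# Figures as reported from Ant's paper, in yuan, per one trillion tokens.
baseline_cost_yuan = 6.35e6    # high-performance hardware
optimised_cost_yuan = 5.1e6    # lower-specification hardware
tokens_trained = 1e12

saving_yuan = baseline_cost_yuan - optimised_cost_yuan
saving_pct = 100 * saving_yuan / baseline_cost_yuan

# Cost expressed per million tokens, for easier comparison.
millions_of_tokens = tokens_trained / 1e6
baseline_per_million = baseline_cost_yuan / millions_of_tokens
optimised_per_million = optimised_cost_yuan / millions_of_tokens

print(f"saving: {saving_yuan:,.0f} yuan ({saving_pct:.1f}%)")
print(f"per million tokens: {baseline_per_million:.2f} -> {optimised_per_million:.2f} yuan")
```

The saving works out to about 1.25 million yuan, or just under 20%, in line with the headline figure.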

The company plans to leverage the recent breakthrough in the large language models it has developed, Ling-Plus and Ling-Lite, for industrial AI solutions including health care and finance, the people said.

Ant bought Chinese online platform Haodf.com this year to beef up its AI services in health care. It also has an AI “life assistant” app called Zhixiaobao and a financial advisory AI service Maxiaocai.

On English-language understanding, Ant said in its paper that the Ling-Lite model did better in a key benchmark compared with one of Meta’s Llama models. Both Ling-Lite and Ling-Plus models outperformed DeepSeek’s equivalents on Chinese-language benchmarks.

“If you find one point of attack to beat the world’s best kung fu master, you can still say you beat them, which is why real-world application is important,” said Robin Yu, chief technology officer of Beijing-based AI solution provider Shengshang Tech.

Ant has made the Ling models open source. Ling-Lite contains 16.8 billion parameters, which are the adjustable settings that work like knobs and dials to direct the model’s performance. Ling-Plus has 290 billion parameters, which is considered relatively large in the realm of language models. For comparison, experts estimate that ChatGPT’s GPT-4.5 has 1.8 trillion parameters, according to the MIT Technology Review. DeepSeek-R1 has 671 billion.

Ant faced challenges in some areas of the training, including stability. Even small changes in the hardware or the model’s structure led to problems, including jumps in the models’ error rate, it said in the paper. — Lulu Yilun Chen, with Debby Wu, (c) 2025 Bloomberg LP
